Multimodal Biometric Security in the Age of Deepfakes

As deepfake technology advances and identity fraud becomes increasingly sophisticated, traditional single-factor authentication methods are no longer sufficient. This article explores how multimodal biometric security, specifically the fusion of facial and iris recognition supported by Presentation Attack Detection (PAD), provides a robust defense against AI-driven spoofing attacks.

By: Mohammed Murad, Chief Revenue Officer, Iris ID

By combining the most convenient biometric, facial recognition, with the most accurate biometric, iris recognition, organizations can ensure one trusted identity and achieve high confidence in identity verification without sacrificing privacy, scalability, or user convenience. In a world where both digital and physical identities are under constant threat, fused multimodal biometrics are emerging as a critical foundation for trusted, future-ready identity systems.

Why Identity Must Be Verified, Not Assumed

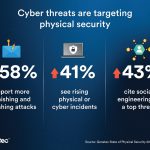

Deepfake technology has moved rapidly from novelty to a normalized threat. What once required advanced expertise can now be easily executed with commonly available tech and a small number of images compiled from social media. As a result, identity fraud is accelerating across both digital and physical infrastructure, targeting financial services, access control systems, border security, data centers, and enterprise environments.

The implication is clear: identity cannot be inferred from appearance or credentials alone. It must be a trusted identity that has been verified and secured, and that uses protections specifically designed for an AI-driven threat landscape.

At the core of this shift is multimodal biometric security: the use of two or more biometric traits, most commonly iris and face, supported by PAD. This approach aligns with guidance from the National Institute of Standards and Technology (NIST), which emphasizes liveness detection and multi-factor strategies to counter biometric presentation attacks and identity spoofing.

Trust Compromised: Deepfakes at the Center

Deepfakes enable presentation attacks that exploit a fundamental weakness of single-mode biometric systems. Facial recognition alone, particularly when operating in visible light, can be vulnerable to high-quality images, replayed videos, or AI-generated likenesses. This compromise of trust is not limited to digital onboarding or remote identity verification. Physical access points, such as doors, gates, kiosks, and checkpoints, are all equally exposed. When identity is reduced to a card, a PIN, or a single biometric factor, attackers need only defeat one control to succeed.

Presentation Attack Detection (PAD) Must Be Mandatory

PAD cannot be treated as an optional enhancement; it must be a prerequisite for any modern biometric security system. NIST SP 800-63 and ISO/IEC 30107 define PAD as the automated process of determining whether a biometric sample is from a live, legitimate subject or a presentation attack. In practice, PAD acts as the first line of defense against spoofing attempts, detecting artifacts that reveal non-live samples such as printed images, replayed videos, synthetic masks, or AI-generated faces.

In high-security environments, the consequences of failure are severe. A single false acceptance in a secure data center can result in operational disruption, financial loss, and regulatory scrutiny. PAD mitigates these outcomes by stopping attacks before matching occurs, preserving system integrity and public trust, and maintaining a single trusted identity.
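
To make the "PAD before matching" ordering concrete, here is a minimal Python sketch. The names (`verify`, `Decision`), the score scale, and both thresholds are illustrative assumptions rather than any vendor's API; real thresholds would come from testing against the relevant standards.

```python
from enum import Enum
from typing import Callable


class Decision(Enum):
    REJECT_ATTACK = "reject_presentation_attack"
    REJECT_NO_MATCH = "reject_no_match"
    ACCEPT = "accept"


# Illustrative thresholds only (assumed scores in [0, 1]); not recommended values.
PAD_THRESHOLD = 0.90
MATCH_THRESHOLD = 0.80


def verify(liveness_score: float, run_matcher: Callable[[], float]) -> Decision:
    """Gate the matcher behind PAD.

    liveness_score: hypothetical PAD output, higher = more likely a live subject.
    run_matcher: hypothetical callable that compares the sample to the enrolled
                 template and returns a similarity score.
    """
    # PAD runs first: a suspected spoof never reaches the matcher.
    if liveness_score < PAD_THRESHOLD:
        return Decision.REJECT_ATTACK
    # Only samples judged live are compared against the enrolled template.
    if run_matcher() < MATCH_THRESHOLD:
        return Decision.REJECT_NO_MATCH
    return Decision.ACCEPT
```

In this sketch a replayed video that would score well in the matcher is still rejected, because the matcher is only invoked once the liveness check has passed.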

Iris Recognition Takes PAD Head-On

Among biometric modalities, iris recognition offers inherent resistance to deepfake attacks. Captured using near-infrared (NIR) illumination, iris systems analyze highly detailed internal patterns that cannot be reproduced by printed images or standard digital displays. This technical advantage is well documented in biometric performance evaluations conducted under ISO/IEC standards. These characteristics explain why iris recognition has been adopted in demanding use cases such as border control and secure data center environments, where proof of identity matters more than convenience.

Why Multimodal Biometrics Are the Key

The most effective response to deepfakes is not choosing between facial or iris recognition but fusing both. Multimodal fusion systems require attackers to simultaneously defeat two fundamentally different biometric technologies: one operating in visible light and the other in near-infrared (NIR). While a deepfake may be capable of deceiving a conventional camera, replicating the live physical characteristics of the iris under NIR illumination presents a far more difficult challenge.

This fusion approach delivers more than enhanced security. Facial recognition provides speed, familiarity, and ease of use, while iris recognition contributes exceptional accuracy and strong resistance to spoofing. By combining the most convenient biometric with the most accurate biometric, multimodal systems create a balanced authentication experience.
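
One way to picture the fusion described above is the following Python sketch. The article does not specify a fusion method, so this assumes a simple scheme: liveness must pass on both modalities, and the two match scores are then combined by a weighted sum. The weights, the threshold, and the names `ModalityResult` and `fused_decision` are hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class ModalityResult:
    """Hypothetical per-modality output."""
    live: bool          # PAD outcome for this modality
    match_score: float  # similarity to the enrolled template, assumed in [0, 1]


# Illustrative values only; iris is weighted higher to reflect its accuracy.
FACE_WEIGHT = 0.4
IRIS_WEIGHT = 0.6
FUSED_THRESHOLD = 0.85


def fused_decision(face: ModalityResult, iris: ModalityResult) -> bool:
    # Both modalities must pass liveness: an attacker has to defeat
    # the visible-light check and the NIR check at the same time.
    if not (face.live and iris.live):
        return False
    # Score-level fusion: a weighted sum of the two match scores.
    fused = FACE_WEIGHT * face.match_score + IRIS_WEIGHT * iris.match_score
    return fused >= FUSED_THRESHOLD
```

Under these assumptions, a deepfake that yields a near-perfect face score is still rejected if the iris capture fails liveness or matches poorly, which is the property the fused approach relies on.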

Privacy, Transparency, and Compliance

As biometric adoption grows, so do concerns around privacy, security, and data protection. Effective multimodal systems are designed with privacy by default, incorporating encrypted biometric templates, secure storage architectures, and clear separation between identity data and personally identifiable information. These practices align with GDPR principles and ISO information security frameworks such as ISO/IEC 27001.
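
The separation between biometric data and personally identifiable information can be sketched as two stores linked only by a random identifier. This is an assumed design for illustration, not a description of any particular product; the class name `EnrollmentStores` and its fields are hypothetical, and the template bytes are assumed to have been encrypted beforehand by a vetted cryptographic library.

```python
import secrets


class EnrollmentStores:
    """Two logically separate stores joined only by a pseudonymous ID."""

    def __init__(self):
        # subject_id -> already-encrypted biometric template (no names, no emails)
        self.templates = {}
        # subject_id -> personal details (no biometric data)
        self.pii = {}

    def enroll(self, encrypted_template: bytes, personal_details: dict) -> str:
        # A random identifier carries no information about the person.
        subject_id = secrets.token_hex(16)
        self.templates[subject_id] = encrypted_template
        self.pii[subject_id] = dict(personal_details)
        return subject_id
```

In a deployment the two dictionaries would typically be separate systems with separate access controls, so that a breach of one store does not by itself expose both the template and the person it belongs to.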

Independent third-party testing also plays a critical role in establishing trust. iBeta Quality Assurance, an accredited biometric testing laboratory, evaluates biometric systems against ISO PAD standards, providing objective validation that systems perform as claimed under real-world attack scenarios.

Equally important is transparency. Users are more likely to trust biometric systems when they understand how data is collected, used, and protected, and when enrollment is based on informed consent. Security that ignores privacy ultimately undermines itself.

Fused Biometrics

Deepfakes are not a passing trend; they represent a persistent and escalating security threat. As AI continues to advance, identity systems must assume that images, videos, and credentials can be forged. Fused multimodal biometrics, reinforced by PAD and validated against NIST and ISO standards through independent testing by laboratories such as iBeta, provide a strategic and resilient response that verifies the person, not the artifact.

The next generation of biometric security will be driven by intelligent systems that integrate multiple modalities, continuously verify liveness, and uphold privacy while delivering uncompromising protection. Organizations that act now will not only reduce risk but also help restore trust in identity itself across both physical and digital applications, while driving home the importance of a single trusted identity.